For all symbols are fluxional; all language is vehicular and transitive, and is good, as ferries and horses are, for conveyance, not as farms and houses are, for homestead.

—Ralph Waldo Emerson, The Poet

In part because I am not satisfied with the conclusion of my thinking about social media, and because the conversation about social media platforms has been peaking in the news cycle, I am writing a third post in what is most likely my ill-fated mini-series, Why I don’t use Twitter.

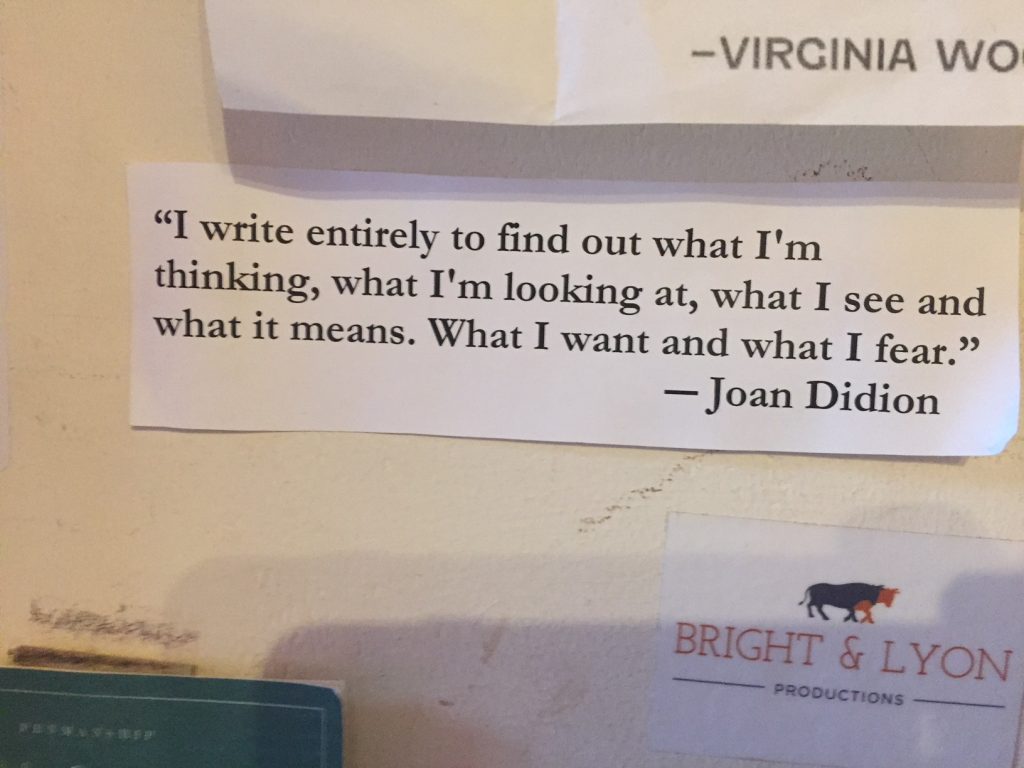

My reason for not using Twitter, as I have explained from the beginning, is that I don’t have the time. I am in love with language, and what language lovers call discourse; and I devote a good deal of time to reading and writing: reviews and commentaries, articles and essays, and books. And, for over ten years, I have been writing on web logs. My practice writing on blogs has modulated between sharing what I have experienced or might know, delivering something to a reader, and seeking or acquiring understanding of one thing or another. Writing helps me figure things out (or not), including what I think, at best on the way to discovering what my thinking might actually mean. The other reason I have chosen not to use Twitter is rooted in a deeper concern about the particular ways the platform shapes forms of expression and social engagement.

Over many years, I have used blogs and wikis, feeds that aggregate content, and social media. Indeed I can say without hesitation that my personal and professional life has become richer as a result of the social and cultural changes digital technologies make possible. It has been breathtaking, to put it another way, to be engaged in literacy and education as digital technologies have proliferated. My doctoral work centered around theories of inquiry, and how the literary activities of reading, thinking, and writing have constituted democratic literacy and culture. And working in a writing center and co-directing a large university writing program put teaching and pedagogy at the center of my intellectual development during graduate school. Sure, my mind was entangled in the beautiful intricacies of intellectual history, theory and criticism, and poetry and poetics; at the same time, I was deeply engaged with teachers and students in heady conversations about teaching and learning–and technology.

The tidal flow of technology felt inevitable: typing up a research project using a word processor for the first time; the first experiences with the graphical user interfaces that began replacing the MS-DOS text commands typed on a keyboard, such as “dir” to list the files in a directory and “del” to delete a file, all taking place with students in the “computer-integrated classroom” in which I volunteered to teach; the department bulletin boards, the local area networks, electronic mail; file transfer protocol, html, web browsers, and the web log; then the proliferation of web 2.0 applications, Friendster and LinkedIn and MySpace, Facebook and Twitter, Google+ and then later applications such as Snapchat and Instagram; video sharing platforms like YouTube and music services such as Spotify; and the integration of live stream technologies in Facebook and Twitter.

When I began working full-time as an assistant professor of English, digital technologies had taken shape in teaching and learning management systems. Moodle, Blackboard, and Canvas were presented to educational institutions as products to manage learning through a modern conception of system management. The history of learning management is a history that includes Frederick Winslow Taylor and the Midvale Steel Works in Pennsylvania, specifically his 1911 treatise The Principles of Scientific Management, in which the systematic laws, rules, and principles to improve manufacturing processes sound all too familiar in an age of standardized testing and learning assessment regimes designed to manage and improve educational outcomes. “In the past Man has been first, in the future the system must be first,” Taylor asserts in his brief introduction.

The design and uses of digital platforms are haunted by Taylor’s vision. The learning management system, or LMS, put the system at the center of learning: it encouraged the use of so-called best practices, created efficiencies for teachers, standardized learning, and facilitated pathways for knowledge transfer. These proprietary systems are designed to monitor student learning in and across courses as well, creating increasingly flexible and “responsive” learning environments. Teachers and administrators have readily incorporated the LMS into their classrooms and institutions. And, if you read Taylor, it is not difficult to understand how the LMS has taken hold. But in this acceptance of the system–that is, once the domain of management becomes the conceptual framework for the domain of teaching and learning–then it becomes all the more difficult to work with a different metaphor. The concept becomes inseparable from the technological and administrative routines of the institution. Teaching and learning is management.

My point is that conceptual metaphors determine the way we think–in this case, how we think about and experience the Internet through the various networks of web pages and sites and services we call the web. To describe the Internet in terms of a stream rather than a network, to take another example, normalizes the increasingly sophisticated algorithms that stream information and news, to provide users more of what they like, and that conveniently construct a personalized information stream.

Nicholas Carr offers a parallel commentary on the metaphors we use when we are thinking about data. As he explains, the terms “mining” and “extraction” are indicators of a conceptual metaphor that determines the limits of our thinking. The problem, he explains, is that data “does not lie passively within me, like a seam of ore, waiting to be extracted”:

Rather, I actively produce data through the actions I take over the course of a day. When I drive or walk from one place to another, I produce locational data. When I buy something, I produce purchase data. When I text with someone, I produce affiliation data. When I read or watch something online, I produce preference data. When I upload a photo, I produce not only behavioral data but data that is itself a product. I am, in other words, much more like a data factory than a data mine. I produce data through my labor — the labor of my mind, the labor of my body.

Carr then turns to Taylor, which surprised me at first, for I had made the connection to Taylor in my attempt to make sense of the LMS. But then my surprise turned to a wider recognition:

The platform companies, in turn, act more like factory owners and managers than like the owners of oil wells or copper mines. Beyond control of my data, the companies seek control of my actions, which to them are production processes, in order to optimize the efficiency, quality, and value of my data output (and, on the demand side of the platform, my data consumption). They want to script and regulate the work of my factory — i.e., my life — as Frederick Winslow Taylor sought to script and regulate the labor of factory workers at the turn of the last century. The control wielded by these companies, in other words, is not just that of ownership but also that of command. And they exercise this command through the design of their software, which increasingly forms the medium of everything we all do during our waking hours.

Once conceptual frameworks become visible we are able to imagine other ways of describing the problem. “The factory metaphor makes clear what the mining metaphor obscures,” Carr explains. “We work for the Facebooks and Googles of the world, and the work we do is increasingly indistinguishable from the lives we lead. The questions we need to grapple with are political and economic, to be sure. But they are also personal, ethical, and philosophical.”

Personal. Ethical. Philosophical. . . . On the one hand, the digital tools are useful for building and sustaining a democratic culture–for sharing information, accessing new dimensions of experience, building community, and bringing people together for forms of social engagement and action. At the same time, these platforms require user consent—to the collection, transfer, storage, manipulation, and disclosure of user information. In Pedagogy and the Logic of Platforms Chris Gilliard explains how

a web based on surveillance, personalization, and monetization works perfectly well for particular constituencies, but it doesn’t work quite as well for persons of color, lower-income students, and people who have been walled off from information or opportunities because of the ways they are categorized according to opaque algorithms

Questions about how the web works are always already questions about who the web works for. And the implications for educators should be clear. For when “persistent surveillance, data mining, tracking, and browser fingerprinting” become normative practices, we easily overlook the same strategies at work in the learning management systems embedded in the digital infrastructures of our colleges and universities.

An article by John Herrman, a technology reporter for the New York Times, offers a useful description of the business model that has determined the design and use of these platforms in the digital ecosystem, which is to say the tools we use to express ourselves and to connect with others:

Since 2012, online platforms have moved to the center of hundreds of millions more lives, popularizing their particular brands of social surveillance. Services like Facebook and Twitter and Instagram are inextricably tied to the experience of being monitored by others, which, if it doesn’t always produce “prosocial” behavior in the broad psychological sense, seems to have encouraged behaviors useful to the platforms themselves—activity and growth.

The model is successful precisely because it is predicated on expanding, on finding “new ways to monetize the powerful twin sensations of seeing and being seen by others.” These sensations have become a distinct feature of digital experience, so much so, in fact, that they have become ubiquitous beyond social networks as well. If one reads digital editions of the New York Times or the Los Angeles Times, for example, retargeted ads appear in the margins or the middle of articles. As these modes of surveillance become more visible we might consider that we are being watched and that we have agreed to being watched.

The broader consequences of accepting the terms of this agreement, of seeing and being seen, are, Herrman claims, “a social-media ecosystem that has annexed the news and the public sphere.” Indeed it is unsettling when one becomes aware of the “nascent but increasingly assertive systems of identity and social currency that seek to transcend borders while answering only to investors.” But what really concerns me are the constitutive features of the experiences we identify in terms of identity and social exchange; for as Herrman explains, “having constructed entire modes of interaction, consumption and identity verification that are now intimately interwoven with our lives,” these modes become so all-encompassing that they’ve practically become invisible. In fact, to “stop using these products,” Herrman concludes, “is to leave the Internet, and these companies made it their mission to make sure there isn’t anywhere else to go. Of course, this is the deal we have entered into with such services: our data for their products.”

The questions social media platforms such as Twitter raise have everything to do with what we mean by community: for social media platforms shape the freedom to define community through surveillance technologies. The invisible but intricate tools for online data collection are monetized for sure. But as April Glaser points out in an article on digital privacy in Slate, “corporate data collection feeds into government surveillance—and it hits people in real ways, too.” And, in What Good is ‘Community’ when Someone Else Makes all the Rules?, a provocative piece that should be required reading for anyone “building communities” online, Carina Chocano writes:

The digital platforms where we fall into all our different groups make us a similar offer, presenting the communities they host as rich, human-built spaces where we can gather, matter, have a voice and feel supported. But their promise of community masks a whole other layer of control — an organizing, siphoning, coercive force with its own private purposes. This is what seems to have been sinking in, for more of us, over the past months, as attention turns toward these platforms and sentiment turns against them.

This is precisely what I have been arguing about the bargain we negotiate when we participate or, through our teaching, invite students to build community online. By framing the use of social media platforms in these terms, especially when using these platforms for teaching and learning, we acknowledge the dilemma.

As anyone who has actually read Gramsci’s The Prison Notebooks will tell you, hegemonies exist. More importantly, consent (or resistance) to a coercive cultural regime or institution does not preclude acknowledging that cultural regimes and/or institutions can also do good. And these structures are by definition fluid, and so can change.

The questions, then, may be larger, more terrifying, and more unpredictable: is social media good or bad for democracy? This question is posed in a January 2018 commentary posted in the Facebook Newsroom, of all places, by a professor of law, Cass R. Sunstein:

On balance, the question of whether social media platforms are good for democracy is easy. On balance, they are not merely good; they are terrific. For people to govern themselves, they need to have information. They also need to be able to convey it to others. Social media platforms make that tons easier.

There is a subtler point as well. When democracies are functioning properly, people’s sufferings and challenges are not entirely private matters. Social media platforms help us alert one another to a million and one different problems. In the process, the existence of social media can prod citizens to seek solutions.

Sunstein’s commentary Is Social Media Good or Bad for Democracy? is part of a series called Hard Questions: Social Media and Democracy, which comprises an introduction by Samidh Chakrabarti of Facebook’s civic-engagement team, Sunstein’s essay, and essays by Toomas Hendrik Ilves, the former president of Estonia, and Ariadne Vromen, a professor of political participation at the University of Sydney.

As Sunstein concludes, “Social media platforms are terrific for democracy in many ways, but pretty bad in others. And they remain a work-in-progress, not only because of new entrants, but also because the not-so-new ones (including Facebook) continue to evolve.” As such, there may be room for imagining new uses, hacking, or performative interventions: for “they all are imitable, all alterable; we may make as good; we may make better,” to borrow apposite terms from Emerson’s commentary on social and political institutions. For the most part I find these kinds of ameliorative outlooks quite congenial.

Such an outlook, though, in no way resolves the ethical dilemma facing any person who makes use of social media platforms in their current forms. Indeed, to ask a student to create any online presence, or to use any online tool, is a personal, ethical, and philosophical choice: it is a form of consent to both the good and the bad–the ideal and the reality of democratic life.

“What would the web look like if surveillance capitalism, information asymmetry, and digital redlining were not at the root of most of what students do online?” asks Gilliard. In part because we do not really know the answer, “when we use the web now, when we use it with students, and when we ask students to engage online, we must always ask: What are we signing them up for?” The asymmetrical relationship each of us has with digital platforms is a consequence of the powerful economic forces that structure the web.

In the end, Gilliard’s ethical questions are the questions I am left asking:

Technology platforms (e.g., Facebook and Twitter) and education technologies (e.g., the learning management system) exist to capture and monetize data. Using higher education to “save the web” means leveraging the classroom to make visible the effects of surveillance capitalism. It means more clearly defining and empowering the notion of consent. Most of all, it means envisioning, with students, new ways to exist online.

The use of social media platforms in the classroom, and the use of learning management systems, are choices we make. They are ethical choices. And they are choices that have everything to do with the ways we have chosen to define digital identity, fluency, and citizenship.

~~~~

Further Reading on Social Media, Digital Ethics, Education, and Democracy

This post is one of three in my mini-series that begins with Why I Don’t Use Twitter. The second post is More on Twitter and the third post is Seeing and Being Seen. Should you be interested in further reading, below is an incomplete and unscholarly reading list, some of the material I was reading as I was writing these posts.

Chocano, Carina What Good is ‘Community’ when Someone Else Makes all the Rules?

Carr, Nicholas I am a data factory (and so are you)

Collier, Amy Digital Sanctuary: Protection and Refuge on the Web?

Cottom, Tressie McMillan Digital Redlining After Trump: Real Names + Fake News on Facebook

Dwoskin, Elizabeth and Tony Romm Facebook’s rules for accessing user data lured more than just Cambridge Analytica

Eubanks, Virginia Want to Predict the Future of Surveillance? Ask Poor Communities

Gilliard, Chris Pedagogy and the Logic of Platforms and Digital Redlining, Access, and Privacy

Glaser, April The Dogs that Didn’t Bite

Herrman, John Cambridge Analytica and the Coming Data Bust

Johnson, Jeffrey Alan Structural Justice in Student Analytics, or, the Silence of the Bunnies

Kim, Dorothy The Rules of Twitter

Leetaru, Kalev Geofeedia Is Just The Tip Of The Iceberg: The Era Of Social Surveillence

Locke, Matt How Like Went Bad

Luckerson, Victor The Rise of the Like Economy

McKenzie, Lindsay The Ethical Social Network

Noble, Safiya Umoja Algorithms of Oppression: How Search Engines Reinforce Racism

Noble, Safiya Umoja and Sarah T. Roberts Engine Failure

Shaffer, Kris Closing Tabs, Episode 3: Teaching With(out) Social Media and Twitter is Lying to You

Stoller, Matt Facebook, Google, and Amazon Aren’t Consumer Choices. They are Monopolies That Endanger American Democracy

Stommel, Jesse The Twitter Essay

Walker, Leila Beyond Academic Twitter: Social Media and the Evolution of Scholarly Publication

Watters, Audrey Selections from Hack Education

Zeide, Elana The Structural Consequences of Big Data-Driven Education

~~~~

Nota bene: If you have been reading my blog of late you might wonder whether I had just discovered the writing of Ralph Waldo Emerson. What is actually the case is that I have spent the past two years with his writing, as well as with the history of commentary on his works, and a good deal of my unscheduled hours in March and April copyediting the proofs—and now compiling the index—for our forthcoming book of essays on teaching Emerson.

![Panel Discussion[DoPy] (9)](http://thefarfield.org/wp-content/uploads/2014/11/panel-discussiondopy-9.jpg?w=1250&h=842)

![International_Conference[DoPy] (6)](http://thefarfield.org/wp-content/uploads/2014/10/international_conferencedopy-6.jpg?w=660)

I began writing about American poetry with two peer-reviewed journal articles: one on the poet Denise Levertov and one on William Carlos Williams. In 2004, my work on Williams continued with “Ideas as Forms of Beauty: William Carlos Williams’s Paterson and A. R. Ammons’s Tape for the Turn of the Year,” an essay that appeared in the book Rigor of Beauty: Essays in Commemoration of William Carlos Williams. More recently, my work in American poetry and poetics has contributed to the emerging field of ecopoetry. My essay “William Carlos Williams, Ecocriticism, and Contemporary American Poetry” appeared in the book Ecological Poetry: A Critical Introduction. Two additional essays were published this fall: a 10,000 word overview of the life and writing of A. R. Ammons, commissioned by the Dictionary of Literary Biography, and a 10,000 word critical history of the relationship between poetry and ecology that appeared in a multi-volume anthology entitled Reading in Contemporary America. In addition, since 2003, I’ve published shorter reference entries on the American poets William Carlos Williams, Theodore Roethke, Mary Oliver, W. S. Merwin, Gary Snyder, and Denise Levertov. I also regularly review new books of American poetry for ISLE: Interdisciplinary Studies in Literature and Environment. My most recent review is of Mary Oliver’s book of poetry Thirst.

Four years of work with co-editors Laird Christensen and Fred Waage has resulted in the publication of Teaching North American Environmental Literature (MLA 2008). Our book provides a center of access to the range of pedagogical possibilities for teaching environmental literature. The collection includes over thirty contributors and features essays on the environmental literatures of Canada as well as Mexican and Mexican-American environmental literature. The book includes a section for further reading, “Resources for Teaching Environmental Literature: A Selective Guide.” Before my last promotion I published the essay “Education and Environmental Literacy: Teaching Ecocomposition in Keene State College’s Environmental House” that appeared in Ecocomposition: Theoretical and Pedagogical Approaches. This publication has led to a series of publications on environmental writers and ecocriticism. In 2004 I published a 12,000 word entry on John McPhee in Dictionary of Literary Biography: Twentieth-Century Nature Writers: Prose. My essay “Ecocriticism and the Practice of Reading” appeared in the fall of 2006 special issue of the journal Reader: Essays in Reader-Oriented Theory, Criticism, and Pedagogy that I guest edited. Reader is a semiannual publication that generates discussion on reader-response theory, criticism, and pedagogy. My essay, and the special issue, is focused around the relationship between reading and ecological thinking.

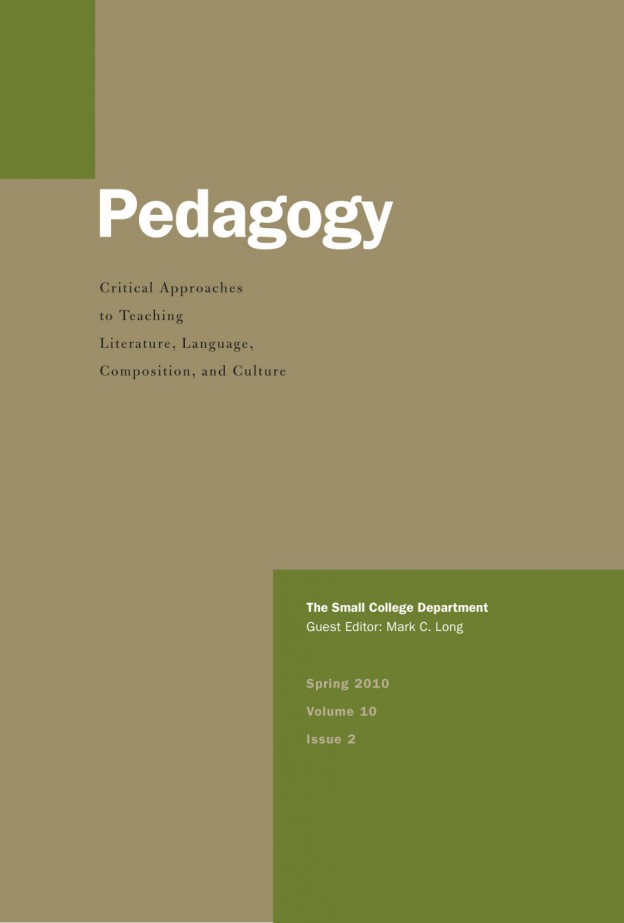

For over ten years I have been writing about the profession of English. As a graduate student, while co-directing the Expository Writing Program at the University of Washington, I co-wrote and published the essay “Graduate Students, Professional Development Programs, and the Future(s) of English Studies” in the journal WPA: Writing Program Administration. Since arriving at Keene State College, my work in this area has focused on the intellectual work of English in particular institutional sites. In 2004, a Keene State College Faculty Development Pool Grant enabled me to travel to the Association of the Departments of English (ADE) Summer Seminar to broaden my perspective as a scholar interested in the profession of English. Moreover, serving for three years as a member (and for one year as Chair) of the MLA Committee on Academic Freedom and Professional Rights and Responsibilities (CAFPRR) furthered my understanding of the general conditions of the field of English studies and the professional lives of teachers and scholars. My subsequent inquiry into graduate training, the professional identity of faculty, and the small college department has been disseminated in a series of publications, book reviews and conference presentations. In 2005 I was invited to write a featured “Commentary” on the small college department, that I titled “Where Do You Teach?”, for the fall 2005 issue of the journal Pedagogy: Critical Approaches to Teaching Literature, Language, Composition, and Culture. And my essay “Reading, Writing and Teaching in Context,” appeared in the book Academic Cultures: Professional Preparation and the Teaching Life (MLA 2008). This essay takes as its subject the representation of faculty work in terms of research and teaching as separate activities. My argument is that this pervasive subplot in the narrative of the profession is rooted in a representation of faculty work that transcends the local institution and the ways that departments and institutions define intellectual work.
